The utilization of community health workers (CHWs) to deliver frontline clinical and outreach services directly to the communities in which they live is widely appreciated as necessary in low- and middle-income countries to reach international health outcome and coverage goals. 1-2 Likewise, community-based information systems (CBIS) and mobile health (mHealth) applications have been increasingly developed and deployed to quantify and support the services delivered by CHWs. 3, 4 Largely implemented by donors and NGOs, these community-based tools have been observed to form community data silos that rarely feed into the national health management information system (HMIS) operated by the Ministry of Health. 5 Ultimately, discordant and fragmented CHW reporting systems result in little institutional buy-in and low use of community data. These are cited as key reasons why only 25% of mHealth and CBIS projects ultimately go to scale beyond 1000 users. 6

Guenther et al. highlighted that the potential of mHealth is hampered by the sheer volume, limited scale, and short lifespan of bespoke mHealth tools. In fact, a single country may host dozens of unconnected mHealth projects, potentially deployed in the same communities. 7 For example, in 2008 up to 80 different mHealth applications were being piloted in Uganda, none of which were ultimately scaled, made interoperable, or coordinated by the Ministry of Health. 8 Additionally, a 2011 World Bank report counted more than 500 mHealth pilot studies about whose uptake, efficacy, or effectiveness virtually nothing was known. 9

Mehl and Labrique argue that the lack of scale and sustainability of community reporting systems is largely due to a short-sighted approach, the narrow perspective of mHealth implementers, and little government oversight. 10 Implementers are bound to this approach by donor requirements for rapid technology development, implementation, and results, whereas a broad, integrated approach simply takes more time and money than implementers are afforded. 11

“… Donors come in with ready strategies and a calculated plan for the funding and the cost for a project. What many fail to include in the strategies is the start-up period, where organizations review what possible obstacles exists for implementing the project, which is a process that can be more expensive and take longer time than the donors predicted. Also, donors tend to set a timeframe that works in their own countries but that needs to be adapted to Uganda.” – Namirembe, Eunice 12

The implementation of unconnected, non-interoperable mHealth systems results in community health information silos that do not feed into the national HMIS, depriving governments of the community data they seek. 7 Likewise, data feedback from siloed, vertical mHealth projects to community-based leaders, groups, and stakeholders is equally disconnected, despite the integrated and interdependent nature of service provision at community level. 13

The fragmented, unregulated, siloed landscape of community-based information systems and mHealth tools ultimately drove the Government of Uganda to impose a nationwide mHealth moratorium in 2012. 14-15 This seminal action has spurred increasing acknowledgment from ministries of health and donors alike that data from all community health programs and services should be integrated into a single data repository, and that mHealth and paper-based tools should interoperate with and between each other to supply these data. 7, 16-17 We refer to this single community data source as a community health information system (CHIS), housed within the national HMIS.

We use the definition of a CHIS as "a combination of paper, software, hardware, people and processes which seeks to support informed decision making and action taking of CHWs. This includes:

- Recording of basic data such as population, health program transactions, case-based data, stock and resource availability,
- Tracking and taking action on individual program-based needs such as disease surveillance, mortality and morbidity,
- Reporting and feedback including routine upward reports, feedback reports, ad hoc reports and specific reports for different stakeholders". 18

The development of CHISs has become a focus for donors and many governments, but little is known about their current state, the technology in use, and the barriers to developing or improving them. While CHW use of mHealth tools has been widely analyzed and researched in many contexts, especially in relation to CHW reporting and support, 6 a comprehensive picture of an integrated CHIS remains largely absent from the literature.

To partially address these research gaps, and to assess the current state and predominant issues of CHISs in countries, a CHIS assessment tool was developed by the Health Data Collaborative (HDC) 18 sub-working group on CHIS. An assessment was subsequently carried out in 17 West and Central African countries: Benin, Burkina Faso, Cameroon, Congo, the Democratic Republic of the Congo, The Gambia, Ghana, Guinea-Bissau, Ivory Coast, Liberia, Mali, Niger, Nigeria, Senegal, Sierra Leone, Chad, and Togo.

Based on the process described above, this paper seeks to address three research questions. First, what is the state of community health information systems in West and Central Africa? Second, what are the major issues to address to improve community health information systems? Third, how suitable is a self-assessment approach for answering these questions, and how can it contribute to a process of CHIS improvement?

In what follows, we describe the methodology of developing and conducting the CHIS self-assessment. Thereafter, we explore the quantitative results of the self-assessment, followed by the qualitative feedback on the assessment process itself. Finally, we discuss the significance of these findings for development assistance to CHIS.

METHODS

The overall method for this study was the development and application of the CHIS assessment tool. This process broadly took place in three stages. First, an international group produced the CHIS guidelines, accompanied by an assessment tool focusing on the same themes as the guidelines. Second, UNICEF supported countries in West and Central Africa to conduct a self-assessment using the tool. Third, the results were presented and discussed at a regional workshop, after which the data were analyzed by the authors of this paper.

Development of the assessment tool

The CHIS assessment tool is part of the “DHIS2 Community Health Information Systems Guidelines”, developed through 2017 as a deliverable to the Health Data Collaborative working group on Community Data. Taking the widely adopted Health Metrics Network HIS Assessment tool 19 as inspiration, the approach was to develop a quantitative questionnaire covering all aspects of CHIS, such as government ownership, financial and institutional sustainability, community engagement, development and use of technology, standard operating procedures, and standards for reporting. The full assessment tool, as well as guidance on how to use it, can be found in the CHIS guidelines. 18

The assessment tool consists of 58 questions, each scored from 0 (worst) to 3 (best). For each of the four possible scores, representing Highly adequate (3), Adequate (2), Present but not adequate (1), and Not adequate at all (0), a situational description was added to guide users in scoring their own system (Table 1). These descriptions are necessarily normative, laying out a “gold standard” for the highest score. The classification of various situations as adequate or not reflects the tool developers’ normative judgement rather than the situation in most countries. These limitations of the tool were known from the start.

The assessment questions are grouped into six assessment themes: government ownership, community engagement, reporting structure, standard operating procedures, system design and development, and feedback.
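To make the structure of the tool concrete, the sketch below encodes the four-level response scale and the six themes in Python and checks that one country's answer sheet holds a 0-3 score for each of the 58 questions. It is a minimal illustration under our own assumptions, not part of the published tool: the names `Score`, `Theme`, and `validate_responses`, and question identifiers such as `Q1`, are hypothetical, and the assignment of individual questions to themes is omitted because it is defined in the CHIS guidelines rather than here.

```python
from enum import Enum, IntEnum


class Score(IntEnum):
    """Four-level response scale of the CHIS assessment tool (0 = worst, 3 = best)."""
    NOT_ADEQUATE_AT_ALL = 0
    PRESENT_BUT_NOT_ADEQUATE = 1
    ADEQUATE = 2
    HIGHLY_ADEQUATE = 3


class Theme(Enum):
    """The six assessment themes that group the questions."""
    GOVERNMENT_OWNERSHIP = "Government ownership"
    COMMUNITY_ENGAGEMENT = "Community engagement"
    REPORTING_STRUCTURE = "Reporting structure"
    STANDARD_OPERATING_PROCEDURES = "Standard operating procedures"
    SYSTEM_DESIGN_AND_DEVELOPMENT = "System design and development"
    FEEDBACK = "Feedback"


N_QUESTIONS = 58


def validate_responses(responses: dict[str, int]) -> None:
    """Raise ValueError unless the sheet has 58 answers, each on the 0-3 scale."""
    if len(responses) != N_QUESTIONS:
        raise ValueError(f"expected {N_QUESTIONS} responses, got {len(responses)}")
    for question_id, value in responses.items():
        try:
            Score(value)  # enum lookup by value fails for anything outside 0-3
        except ValueError:
            raise ValueError(f"{question_id}: {value!r} is not on the 0-3 scale") from None
```

Keeping the scale as an `IntEnum` lets scores be summed directly while still rejecting out-of-range entries, which is the main data-entry error a shared spreadsheet cannot easily prevent.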

Even when the assessment is done by a group of people in a country, the scores may not precisely reflect the situation. However, the real value is considered to lie in the exercise of doing the assessment as a group, as it creates an opportunity for direct interaction among stakeholders at all levels. Conducting the assessment in a group also helps to control for individual participants’ potential bias, which matters because the tool was not piloted and the extent of individual response bias is therefore unknown. This paper reports on the assessments carried out in 17 countries from a benchmarking perspective. These 17 were the countries, out of the 20 with a national community health program, that responded to the call for self-assessment from the UNICEF West and Central Africa Regional Office.

A qualitative assessment method was also described in the guidelines, focusing on detailed investigation at the micro level. However, this complementary method is not part of this paper.

Conducting self-assessments

The CHIS guidelines outline the process of conducting the assessment. First, a stakeholder identification exercise identified relevant participants or groups involved in the assessment (Table 2).

The process of conducting the assessment itself is not detailed, except that each question should be discussed in plenary, and an agreement should be reached regarding the appropriate score.

After the initial launch in June 2017, UNICEF’s West and Central Africa Regional Office (WCARO), based in Dakar, Senegal, initiated CHIS assessments in the countries in the region. To facilitate the convening of the participants, a small grant was provided to some countries, with support from The Global Fund. The countries, represented by the Ministry of Health or other relevant actors, conducted the assessment without any further guidance or participation from the Health Data Collaborative.

The countries conducted the assessment during a two-day workshop in the capital city with support from UNICEF. The UNICEF country office selected participants, organized the workshop, and prepared a presentation of the data flow to be discussed during the workshop. The workshops included 30 participants on average, including representatives from the Ministry of Health and other relevant ministries, from the regional and district medical levels, and from the community level (CHWs, chief nurses of health posts, matrons, etc.), as well as partners involved in community health projects. The first day of the workshop was dedicated to responding to the CHIS assessment tool in plenary. In some countries, however, the number of participants was too large to answer the questions in plenary, and the exercise was therefore organized in working groups (each group representing, as far as possible, all categories of participants). In certain cases, all groups responded to all questions; in others, each group answered only some sections. In all cases, responses were presented and validated by the assembly at the end of the day. The second day was dedicated to analyzing the country’s community health data flow, synthesizing and presenting findings from both exercises, and formulating recommendations to strengthen the CHIS, to be used as a basis for developing a roadmap.

The results of the assessments were presented in March 2018 at a CHIS Academy in Dakar, in which all countries participated.

Data collection and analysis

Primary data collection took place during the one-week CHIS workshop in March 2018, where all countries were present. Each country shared its assessment spreadsheet and presented the main findings in a PowerPoint presentation (Microsoft Corporation, Redmond, WA, USA). The assessment spreadsheets also gave us access to any comments recorded against individual assessment questions. As the CHIS assessment was not directly observed in all countries, qualitative data were also collected from the country presentations and from focused, round-table follow-up discussions with the workshop participants, all of whom had taken part in the country assessments. Further, informal interviews were conducted with country representatives during the workshop when follow-up questions were needed to clarify points made in the country presentations of findings and the preceding round-table discussions.

The numerical scores from the assessments were organized in a pivot table, allowing analysis across a range of filters and combinations. We were primarily interested in the following analyses:

- Common strengths and weaknesses,
- Variance across countries.
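The two analyses above can also be approximated without a spreadsheet. The sketch below is a hypothetical stand-in for the pivot table, assuming the scores are held as one record per country and question (the field names `country`, `theme`, `question`, and `score`, and the helper names, are our own). It computes a composite score per theme or per question, orders groups from weakest to strongest, and reports the sample variance of each question's scores across countries.

```python
from collections import defaultdict
from statistics import variance

MAX_SCORE = 3  # score for "Highly adequate"


def composite_by(rows, key):
    """Composite score (%) for each value of `key` (e.g. "theme" or "question")."""
    totals, counts = defaultdict(int), defaultdict(int)
    for row in rows:
        totals[row[key]] += row["score"]
        counts[row[key]] += 1
    return {group: 100 * totals[group] / (MAX_SCORE * counts[group]) for group in totals}


def weakest_first(composites):
    """Order groups from lowest to highest composite score (weaknesses first)."""
    return sorted(composites, key=composites.get)


def variance_across_countries(rows):
    """Sample variance of each question's scores across countries (needs >= 2 countries)."""
    by_question = defaultdict(list)
    for row in rows:
        by_question[row["question"]].append(row["score"])
    return {question: variance(scores) for question, scores in by_question.items()}
```

As an illustration with toy data (not study results), two countries scoring a feedback question 3 and 2 and an ownership question 0 and 1 give composites of about 83.3% and 16.7%, so `weakest_first` lists the ownership theme first, and both questions have a sample variance of 0.5.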

The qualitative data from discussions and interviews were analyzed by identifying quotes on the assessment process. The main topics of interest related to:

- The content of the assessment tool and how it informed the assessors,
- Experiences with the broad participatory approach.

Ethical considerations

All data, assessment scores, and testimonies were provided by the study participants in full acknowledgement that the data may be shared broadly and published anonymously. Accordingly, we have aggregated and anonymized the quantitative assessment results, and direct quotes are attributed to country delegations rather than individuals, both during data capture and in the published results. It should be appreciated that, although a degree of collective agreement within the country delegations was required to conduct the self-assessment, the results still represent an informed opinion and are not a direct observation by the authors of this paper.

This research is not subject to ethical review or notification by the Norwegian Centre for Research Data (NSD), because no directly or indirectly identifiable personal data were registered or published and the NSD guidelines for anonymous information were followed.

RESULTS

Here we present two sets of data: the quantitative scores from the assessment spreadsheets, and the corresponding qualitative data derived from the spreadsheets (for instance, comments on individual questions), from each country’s presentation and follow-up interviews, and from direct observation of the self-assessment in three countries.

Assessment scores

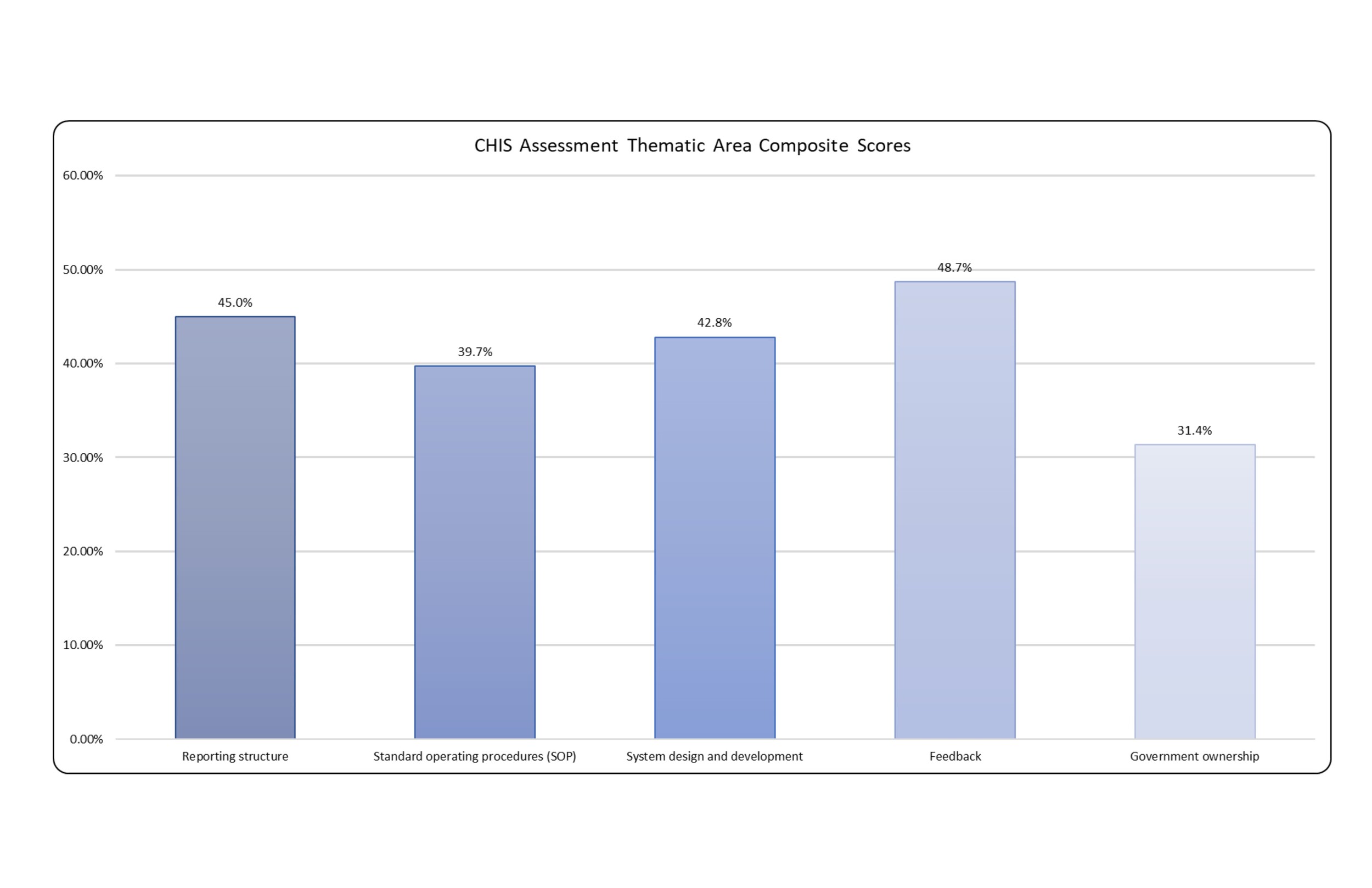

In this section we present the aggregated findings of the 17 country assessments. These have been anonymized, and presenting each country individually is beyond the scope of this paper. We report an aggregated composite score for each of the six assessment themes and for each individual question, expressed as a percentage of the highest possible score.

This score was calculated as:

Composite score (%) = (total aggregated score across all countries / total available score) × 100
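As a minimal sketch, assuming each response is an integer from 0 to 3, the formula can be written as a small Python function (`composite_score` is a hypothetical name). The worked call reproduces the public webpage question reported below: thirteen countries scoring 0, three scoring 1, and one scoring 3 give 6 of a possible 51 points.

```python
MAX_SCORE = 3  # score for "Highly adequate"


def composite_score(scores):
    """Composite score (%) = total aggregated score / total available score x 100."""
    scores = list(scores)
    if not scores:
        raise ValueError("at least one response is needed")
    if any(s not in (0, 1, 2, 3) for s in scores):
        raise ValueError("every response must be scored 0-3")
    total_available = MAX_SCORE * len(scores)
    return 100 * sum(scores) / total_available


# Public webpage question: 13 countries scored 0, 3 scored 1, 1 scored 3.
print(round(composite_score([0] * 13 + [1] * 3 + [3]), 1))  # → 11.8
```

With 17 countries, each question has a maximum of 51 points, so one point moves a question's composite score by about 1.96 percentage points; this is why the per-question scores in this section fall on multiples of 100/51 (for example, 40 points give 78.4%).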

Every country provided a numerical score (0-3) for all questions, giving a total of 986 recorded responses (17 countries × 58 questions). The mean across all responses is 1.2, the sample standard deviation is 1.2, and the sample variance is 1.3 (Table 3).
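The descriptive statistics above can be reproduced with Python's standard `statistics` module, whose `stdev` and `variance` functions use the sample (n - 1) definitions. The sketch assumes the 986 responses are available as one flat list of 0-3 integers; the helper names are hypothetical, and no study data are included. It also shows that a mean of 1.2 on the 0-3 scale corresponds to an overall composite score of about 40%.

```python
from statistics import mean, stdev, variance

N_COUNTRIES = 17
N_QUESTIONS = 58
MAX_SCORE = 3


def describe(responses):
    """Count, mean, sample SD and sample variance of a flat list of 0-3 scores."""
    return {
        "n": len(responses),
        "mean": mean(responses),
        "sd": stdev(responses),           # sample (n - 1) standard deviation
        "variance": variance(responses),  # sample (n - 1) variance, equal to sd ** 2
    }


def mean_to_composite(mean_score):
    """Express a mean on the 0-3 scale as a composite percentage."""
    return 100 * mean_score / MAX_SCORE


print(N_COUNTRIES * N_QUESTIONS)          # expected number of responses
print(round(mean_to_composite(1.2), 1))   # overall composite implied by the mean
```

Since the variance is the square of the standard deviation, the rounded values reported in the text (1.2 and 1.3) are mutually consistent for an unrounded standard deviation of roughly 1.15 to 1.16.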

Looking at the thematic areas, the lowest-scoring theme is government ownership and the second lowest is community engagement (Figure 1). Broadly, this indicates that ministries face major challenges in managing the CHIS and that major limitations remain in engaging community stakeholders themselves in CHIS development. The highest-scoring assessment area is feedback. The highest-scoring question in the feedback section is “Do CHWs supervisors provide regular feedback on reporting and data quality to the CHWs?”, with a composite score of 78.4%. This score suggests that clear communication channels exist between CHWs and their supervisors in most countries. Somewhat anomalously, one question had a composite score of only 19.6%: “Do CHWs get automatic feedback when reporting data?” Nine countries recorded a zero score, indicating that CHWs receive neither manual nor automatic notification of data quality checks and data submission, and that most CHWs report issues or confusion in knowing whether data have been submitted. One additional question also had a relatively high composite score of 78.4%: “Is CHW reporting integrated in one system, linked to national Health Information Management System (HMIS)?” With eleven countries scoring it 3, this suggests that most of the countries performing the assessment capture some form of CHW reporting in the national HMIS.

To identify additional noteworthy, self-reported barriers to CHIS development and implementation, we now look more closely at the remaining questions that scored below 25%. The two lowest-scoring questions both have a composite score of 11.8%. The first is “Do traditional health providers report through the CHIS?” It became clear during country feedback on the assessment that many countries do not have, or do not consider, traditional health providers to be part of the formal health system, and this question may therefore not be applicable. The second is “Is there a public webpage with relevant indicators on community health?” Thirteen countries scored this question 0, three scored it 1, and only one scored it 3. The zero-value response is “No information is available for any community member beyond the CHW.” This clearly indicates that virtually no data are made publicly available to community stakeholders.

The next two lowest-scoring questions both scored 13.7%. The first is “Are phones, reliable electricity and network coverage, available for CHWs reporting?” The responses indicate that much of the CHW workforce still lacks access to the infrastructure needed for mobile or mHealth tools. The second is “What are the mechanisms for financing and topping up phone subscriptions or credits?” The results for this question indicate that in the vast majority of the assessed countries, CHWs lack the financing or free services needed to submit or receive data electronically.

One question scored 17.6%: “Is there a project budget to develop and launch the CHIS?” Eleven countries recorded a zero, indicating that no budget exists. Two questions scored 19.6%. The first is “Is there an annual budget for supporting the CHIS?”, indicating that the clear majority of countries either have no annual budget or have an insufficient one. The second is the question on automatic feedback to CHWs discussed above. One question scored 21.6%, with eight countries recording a zero: “Is there a long-term sustainability plan for the CHIS?” The scores for this question indicate that in most countries there is no plan for government ownership, or that a plan exists but is not widely distributed and is seldom adhered to. Two questions scored 23.5%. The first is “Is there an established CHIS Technical Working Group (TWG) led by ministry senior staff and including representation from key stakeholder groups?” The scores show that the majority of countries either do not have a CHIS TWG or have one that lacks clear leadership and is unable to influence the CHIS. The second is “Is the introduction and use of new technology supported by mechanisms for user guidance, troubleshooting, and replacement of technology and hardware over time?” This score shows that very few countries have adequate end-user support to address technical issues or replace hardware.
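The below-25% screen used in this section can be sketched by filtering the composite scores it reports. In the sketch below the dictionary keys are our own short paraphrases of the question wording (hypothetical labels), while the percentages are those given in the text. The helper `implied_points`, also hypothetical, backs out the aggregate score out of 51 (17 countries × 3) behind each rounded percentage, which shows that the below-25% group corresponds to 12 or fewer points: 12 points give 23.5%, and 13 points would already give 25.5%.

```python
# Composite scores (%) reported in the text; keys paraphrase the questions.
REPORTED_LOW_SCORES = {
    "Traditional health providers report through the CHIS": 11.8,
    "Public webpage with community health indicators": 11.8,
    "Phones, electricity and network coverage for CHWs": 13.7,
    "Financing of phone subscriptions or credits": 13.7,
    "Project budget to develop and launch the CHIS": 17.6,
    "Annual budget for supporting the CHIS": 19.6,
    "Automatic feedback to CHWs when reporting": 19.6,
    "Long-term sustainability plan": 21.6,
    "CHIS Technical Working Group": 23.5,
    "User support and hardware replacement": 23.5,
}

N_COUNTRIES = 17
MAX_SCORE = 3
THRESHOLD_PCT = 25.0


def implied_points(composite_pct, n_countries=N_COUNTRIES):
    """Aggregate score (out of 3 * n_countries) behind a rounded composite percentage."""
    return round(composite_pct / 100 * MAX_SCORE * n_countries)


def below_threshold(scores, threshold=THRESHOLD_PCT):
    """(question, composite %, implied points) for questions under the threshold, lowest first."""
    low = [(q, pct, implied_points(pct)) for q, pct in scores.items() if pct < threshold]
    return sorted(low, key=lambda item: item[1])


for question, pct, points in below_threshold(REPORTED_LOW_SCORES):
    print(f"{pct:5.1f}%  {points:2d}/51  {question}")
```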

The process of conducting the assessment

Direct observation

The self-assessment exercise was observed in three countries, both English-speaking and French-speaking. In this way, the understanding and acceptability of the tool could be tested in both its French and English versions.

The exercise was unanimously seen as a unique opportunity to have all stakeholders discussing the CHIS together. Indeed, opportunities for community health representatives and health information system representatives to meet at national level are often limited, and opportunities for the central level to meet CHWs are even rarer. However, not all participants were comfortable speaking out, and CHWs often had to be encouraged to share their views.

The main difficulty of the exercise lay in choosing a response fairly and reaching consensus when none of the proposed answers seemed to fit the country situation exactly. However, as the exercise progressed, participants increasingly took ownership of the tool and the exercise, adding specific comments where needed and recognizing that the opportunity to monitor future progress is at least as important as the exact score of the day.

Regarding the tool itself, misunderstandings of some questions led to edits: rephrasing or clarifying some questions, changing the order of some questions for better consistency, and splitting questions that encompass two ideas at once (e.g., it was proposed to split “Are technical skills and hosting facilities available within the country?” into one question about technical skills and one about hosting facilities).

During the following week-long workshop in March 2018, all 17 countries that conducted the assessment presented their impressions of the assessment process and its resulting findings. Here we present only the key takeaways presented by the countries.

Insights from the assessment tool and process

Several participants gave positive feedback on the assessment tool and process. One positive aspect of the tool was the informative nature of the questions and their answer alternatives, which conveyed a “gold standard” as well as a way to evaluate the system:

“[The assessment] was an eye opener and very good learning process so we can understand the needs of the CHW and put more work in and need to support the CHWs.” – Sierra Leone

“We can use the tool to have a community observatory. It [the assessment] is a very useful tool and the various tools explained are something that we can keep working on.” – DRC

The participants also pointed to the process of conducting the assessment as positive, in that it brought together various stakeholders to inform each other:

“It enables us to bring all participants from all levels to see what was working what was not working and what we can improve.” – Benin

“The self-evaluation enabled us to evaluate various aspects of the CHIS including the stakeholders and enable us to improve the system.” – Mali

“It enabled us to bring together the whole system from the national to the community. Everyone in one room including the CHW that gave feedback on the tools and get the opinion of everyone involved.” – Cote D’Ivoire

Insights to community stakeholders and activities

A common experience communicated to us was that lead evaluators, often based at the national level, had surprisingly little knowledge about what was going on at the community level:

“When we conducted the evaluation, we did not have enough information on the community. It was like we did not know much about community interventions” – Cameroon

“The CHWs were invited and it was a surprise when we talked to them the idea of the answers we had to these questions. The community stakeholders had a different approach to inputs. The community would address much of the problems before they were informed at the central level.” – Togo

“In the feedback some of the activities were already done in the community and much of what is happening at the community does not arrive to the ministry of health and we thought that we need a relevant policy to reflect this.” – Chad

“Another surprise it showed that state ownership and community engagement was also zero.” – Chad

Insights into existing CHIS

Lastly, the tool and process were overall deemed useful for assessing the state of the CHIS:

“Before the evaluation we developed a lot of other things but we did not have any components to enhance or improve the system. We were just doing sight navigation but we had no idea how to improve it.” – Benin

“We did have collectors and we thought that we had very good indicators, but we realized that we were in very plenary phase. We also found ourselves with some SOPs, but we didn’t think that SOPs were very important. We had many SOPs already, but we did not know we had so many SOPS. We thought this would be an opportunity to use the tool at the annual review of the taskforce.” – Cameroon

“We tried to analyze the weaknesses and it is quite worrying and we didn’t have any documentation” – Guinea-Bissau

“The biggest surprise was with incentives for data submission. To get incentives was a big surprise for us. The surprising thing was the automated feedback mechanisms where community health workers could have real-time information was a surprise.” – Gambia

Limitations

The CHIS assessment process and questions themselves have several notable limitations. First, as noted above, including traditional healers in the assessment was widely seen as inappropriate and unnecessary, so this question should be removed from future assessments. The assessment was also based on the current understanding of the ideal CHIS as outlined in the HDC DHIS2 CHIS Guidelines, and this normative standard may not be appropriate in many country contexts. The broad differences in the roles and responsibilities of CHWs illustrate this. For example, the assessment assumes that CHWs are able to perform some clinical diagnosis and treatment; in India, however, CHWs are not legally allowed to do this and can only refer patients to health facilities. 18

The CHIS assessment also includes no considerations for gender in CHIS design, implementation, CHW roles, stakeholder engagement, or CHIS governance. Unless specific provisions are made for gender inclusion and equity, unforeseen and unintentional gender bias will almost certainly be introduced into CHIS development and implementation. Future iterations of the assessment tool and methodology should include questions and guidance that help countries become aware of potential gender bias, and should suggest approaches and present aspirational standards to mitigate it.

DISCUSSION

Conducting the CHIS assessment was a formative step in the design and development of the CHIS in these 17 countries. The assessment results show that the development and implementation of a CHIS is still largely nascent: in most countries, the CHIS is in a planning or conceptual phase. Still, the assessment results allow us to glean several key insights.

First, the qualitative and quantitative data from the assessment clearly show that system developers had rarely, if ever, engaged directly with community health workers or key community stakeholders before the assessment. Designing with the user and understanding the existing ecosystem and context of system use, both principles for digital development, are understood to be integral to system use and, ultimately, sustainability. 20 In fact, several countries voiced surprise at the type and quantity of activities that communities were already performing. The post-assessment country feedback made clear that the assessment provided a unique opportunity to bring community stakeholders and CHWs directly into the conversation around CHIS design and development. It was also noted that the assessment would ideally be conducted periodically to keep system developers engaged with feedback from CHWs and community stakeholders.

Data feedback to CHWs and community stakeholders is also understood to be critically important, 21, 22 yet it is largely lacking, especially for community stakeholders. Technology solutions and CHIS implementers should explore ways to increase both automated digital feedback and manual analogue feedback to CHWs and community stakeholders. However, the cost of data flowing up into the CHIS and of delivering feedback down to community actors is a major concern.
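One way to provide the automatic feedback discussed here is to acknowledge every submission immediately and attach the results of simple data-quality checks. The sketch below is hypothetical throughout: the field names, the checks, and the message wording are our own assumptions, not features of any tool used in the assessed countries. An incomplete report is rejected with a message naming what is missing, so the CHW knows the data did not go through; a complete one is acknowledged, with warnings for implausible values.

```python
from dataclasses import dataclass, field

# Hypothetical report fields; a real CHIS would take these from its own data set definition.
REQUIRED_FIELDS = ("chw_id", "period", "households_visited", "children_seen", "children_treated")
COUNT_FIELDS = ("households_visited", "children_seen", "children_treated")


@dataclass
class Feedback:
    """Immediate acknowledgement returned to the CHW after a submission."""
    accepted: bool
    messages: list[str] = field(default_factory=list)


def check_report(report: dict) -> Feedback:
    """Run simple completeness and plausibility checks on one CHW report."""
    missing = [name for name in REQUIRED_FIELDS if report.get(name) in (None, "")]
    if missing:
        # Reject incomplete reports so the CHW is told the data were not submitted.
        return Feedback(False, [f"Not submitted: missing {name}" for name in missing])

    messages = [f"Received report for {report['period']}"]
    for name in COUNT_FIELDS:
        if report[name] < 0:
            messages.append(f"Check {name}: value cannot be negative")
    if report["children_treated"] > report["children_seen"]:
        messages.append("Check: more children treated than seen")
    return Feedback(True, messages)
```

Returning the acknowledgement even when warnings are present keeps the submission confirmed while still prompting the CHW, or a supervisor, to review the flagged values.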

The data show that these governments are severely under-resourced to support robust community health information systems. This under-resourcing is at least partially caused by, and in turn reinforces, the reality of siloed, unconnected, program-specific community-based mHealth and support tools. 7 There is clearly a need for governments and implementers to invest from domestic resources and to advocate for donors to refocus their funding from siloed mHealth projects to core CHIS funding. Additionally, governments will need to develop and enforce national health system strengthening policies that require mHealth projects to interoperate with the CHIS residing in the national HMIS. 18, 23

Complex and expensive interoperability layers between mHealth apps and the CHIS will be unsustainable for ministries of health given their financial constraints. Governments and donors could look to software vendors that have adopted shared, open technical standards such as ADX, HL7, and FHIR to lessen the financial and technical hurdles to achieving interoperability between mHealth tools and the CHIS. 17, 19 Additionally, the selection, by ministries and implementing partners, of free and open-source mHealth tools that maintain a well-documented code base and a robust application programming interface (API) has been cited as a means of reducing reliance on a single software vendor. 24 More recently, the adoption of digital global public goods has emerged as a sounder way to ensure long-term financial and technical support for, and development of, sustainable mHealth tools. 11, 17
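To illustrate what adopting a shared standard involves, the sketch below serializes one month of a CHW's aggregate counts as an XML message modelled on ADX, the IHE aggregate data exchange profile. The element and attribute names (`adx`, `group`, `dataValue`, `orgUnit`, `period`, `dataSet`, `dataElement`, `value`, `exported`) and the namespace follow our reading of that profile and should be checked against the profile itself; all identifiers (`OU_HYPOTHETICAL`, `CHW_MONTHLY`, `HOUSEHOLDS_VISITED`, `CHILDREN_TREATED`, the `SEX` disaggregation) are hypothetical. HL7 and FHIR would require different payloads.

```python
import xml.etree.ElementTree as ET

# Namespace as we understand it from the IHE ADX profile; confirm before relying on it.
ADX_NS = "urn:ihe:qrph:adx:2015"


def build_adx(org_unit, period, data_set, values, exported):
    """Serialize one reporting unit's aggregate values as an ADX-style XML message.

    `values` is a list of (data_element, value, disaggregations) tuples, where
    `disaggregations` maps extra attribute names (e.g. "SEX") to codes.
    """
    ET.register_namespace("", ADX_NS)  # write ADX as the default namespace
    root = ET.Element(f"{{{ADX_NS}}}adx", exported=exported)
    group = ET.SubElement(root, f"{{{ADX_NS}}}group",
                          orgUnit=org_unit, period=period, dataSet=data_set)
    for data_element, value, disaggregations in values:
        attrs = {"dataElement": data_element, "value": str(value), **disaggregations}
        ET.SubElement(group, f"{{{ADX_NS}}}dataValue", attrs)
    return ET.tostring(root, encoding="unicode")


print(build_adx(
    "OU_HYPOTHETICAL", "2018-01-01/P1M", "CHW_MONTHLY",
    [("HOUSEHOLDS_VISITED", 42, {}), ("CHILDREN_TREATED", 5, {"SEX": "F"})],
    exported="2018-02-05T10:00:00Z",
))
```

Because such a message follows a published schema rather than a vendor-specific format, an HMIS that accepts ADX could in principle import it without a custom interoperability layer, which is the cost argument made above.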

Ministries also reported, in both the qualitative and quantitative assessment data, that SOPs are still lacking and that general governance of the CHIS is a persistent problem. Notably, Cameroon indicated that many SOPs had been developed but were not considered important or adhered to, and noted that the importance of SOPs was a surprise from the assessment. In Senegal, the exercise made it possible to inform all stakeholders of the ongoing development of national HMIS SOPs and to consider involving representatives from the Community Health Division in the process. Conversely, Ghana indicated that it has extensively developed, and adheres to, SOPs for CHW reporting, but specified in its assessment that SOPs had not yet been developed for M&E units to use community-level data for program outcome and impact analysis.

Infrastructure, namely access to cell phones, a reliable electrical power supply, and mobile network coverage, clearly continues to be a principal limitation for community information systems. It has been reported that more than 95% of the global population has access to mobile phones; therefore, mHealth specifically is increasingly used to support CHW data collection, decision support, alerts and reminders, and information access. 3 By looking more broadly at the infrastructure and financial constraints on using mHealth, this assessment suggests gaps in the applicability of prior mHealth studies. Governments, telecommunication providers, and donors will need to continue developing the infrastructure and lowering the cost of mobile data in order to move away from slow, error-prone, paper-based community reporting.

Finally, the assessment results and participation in the workshop show a strong general appetite for a government-owned CHIS that integrates all community data into the national HMIS. The data indicated that the majority of countries were already capturing community data in the HMIS. This reinforces the mainstream view that ministries need to include disaggregated community data in the HMIS and retain oversight of CHW reporting systems and tools.

In sum, the feedback from participants related not only to the appropriateness of the tool for the objective of the assessment, but also to the way the tool conveyed how a system should function, and to how involving all stakeholders created a more unified view of the system and brought stakeholders closer together.

Acknowledgements

The authors would like to thank the Health Division of UNICEF West and Central Africa, Global Fund, and the Health Data Collaborative for their contributions and support that made this research possible.

Disclaimer: The views expressed in the submitted article are the authors’ own and not an official position of the University of Oslo, UNICEF, or any other organizations.

Funding: Funding for the development of the CHIS Guidelines and the CHIS Academy was provided by the Health Data Collaborative via the Global Fund. Funding for the CHIS Assessment in 17 West and Central African countries was provided by UNICEF. No support was provided for the writing of the article itself.

Competing interests: The authors completed the Unified Competing Interest form at http://www.icmje.org/coi_disclosure.pdf (available upon request from the corresponding author), and declare no conflicts of interest.

Correspondence to: Scott Russpatrick, Thorvald Meyers Gate 34A, Oslo, Norway 0555